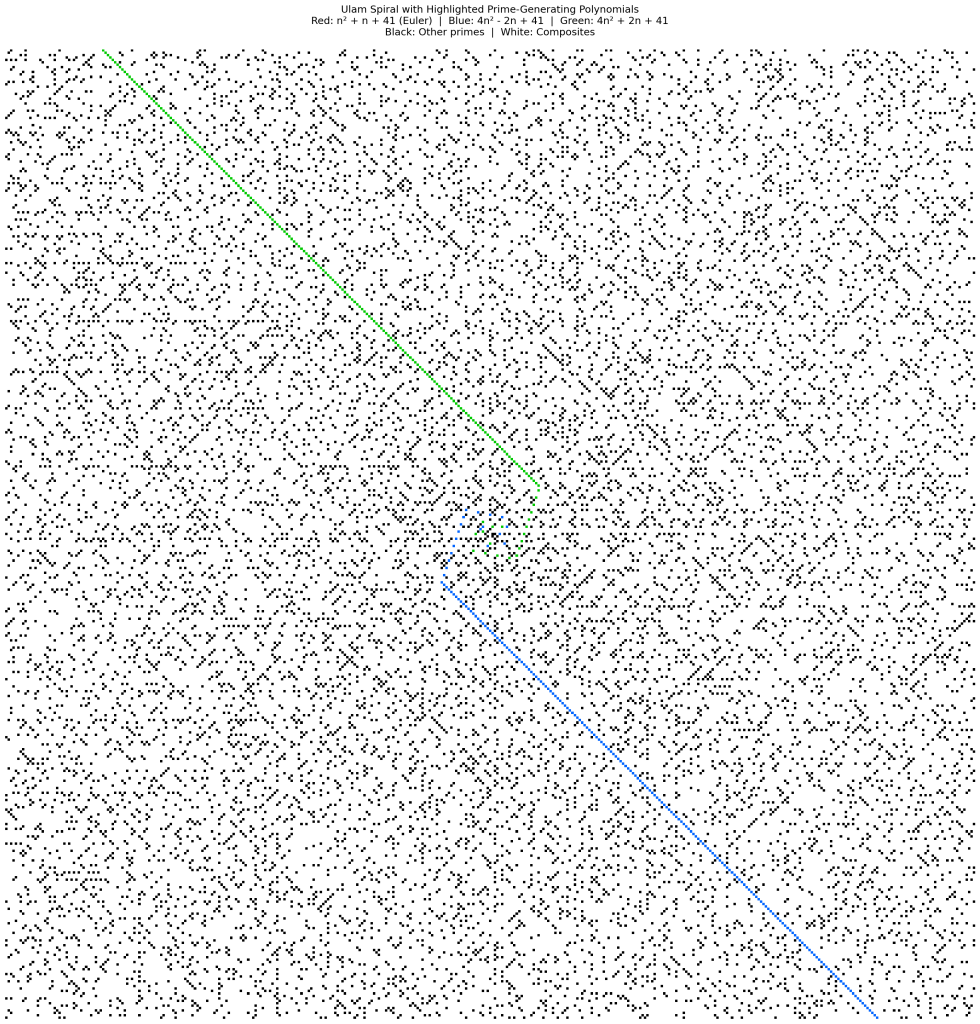

In 1963, Stanisław Ulam was stuck in a boring lecture, so he started writing integers in a spiral. When he circled the primes, they stubbornly clustered into diagonal streaks.

I recently lost a weekend trying to build a tool to subtract that obvious structure just to see what was hiding underneath. At the time, my feed was choked with hot takes about “vibe coding”—the idea that having an AI write your code turns you into a helpless spectator who inevitably ships fragile garbage.

I decided to test the math and the hot takes at the same time. I fired up Claude, OpenAI’s models, Grok, and a few local LLMs, and started building Prime_Plot_AI. The goal wasn’t to magically discover prime secrets. It was to see what happens when you use these models to sprint into a domain where you know just enough to build the tools, but not enough to fly blind.

I expected a quick weekend project. I was wrong on multiple counts.

The Engine

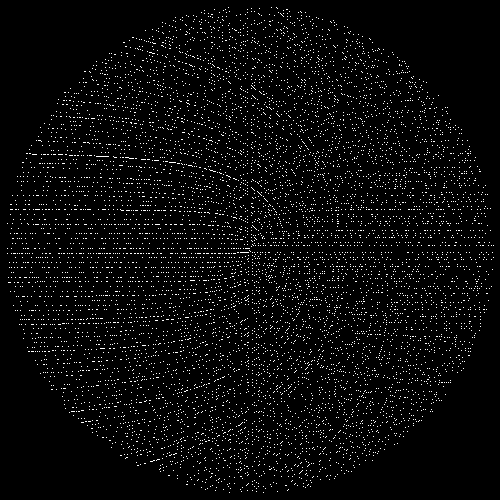

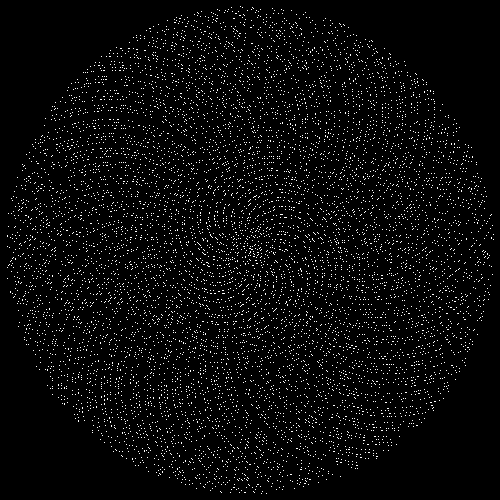

Prime_Plot_AI wasn’t built to be an “AI that discovers prime secrets.” It’s a brutally practical system that loops through three steps. First, it renders prime distributions using different mappings (spirals, shells, modular plots). Second, it detects structure with pattern-finding tools (directional convolution, Hough lines, FFT/Gabor-ish texture detectors). Third, it subtracts what it can explain, so we can stare at the leftovers without fooling ourselves.
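The post doesn’t show how Prime_Plot_AI lays numbers out, so here is a minimal, hypothetical sketch of the classic Ulam mapping it starts from. The names `ulam_coords` and `render_primes` are my own, trial division stands in for whatever primality test the real tool uses, and the “image” is a plain list of lists.

```python
def is_prime(n):
    """Trial division; fine for the small grids in this sketch."""
    if n < 2:
        return False
    if n % 2 == 0:
        return n == 2
    d = 3
    while d * d <= n:
        if n % d == 0:
            return False
        d += 2
    return True


def ulam_coords(limit):
    """Map each integer 1..limit to an (x, y) cell on a square spiral centered at 1."""
    coords = {1: (0, 0)}
    x = y = 0
    n = 1
    step = 1
    directions = [(1, 0), (0, 1), (-1, 0), (0, -1)]  # right, up, left, down
    d = 0
    while n < limit:
        for _ in range(2):  # each leg length is used twice before it grows by one
            dx, dy = directions[d % 4]
            for _ in range(step):
                if n >= limit:
                    break
                x += dx
                y += dy
                n += 1
                coords[n] = (x, y)
            d += 1
        step += 1
    return coords


def render_primes(size):
    """Return a size x size grid (size odd) with 1 where the spiral lands on a prime."""
    half = size // 2
    grid = [[0] * size for _ in range(size)]
    for n, (x, y) in ulam_coords(size * size).items():
        if is_prime(n):
            grid[half - y][x + half] = 1  # row 0 is the top of the picture
    return grid


for row in render_primes(11):
    print("".join("#" if cell else "." for cell in row))
```

Any odd size works; the diagonal streaks get easier to see as the grid grows.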

The important part isn’t the AI. The important part is the loop. It forces you to write down what you think you know, formalize it, and let the output embarrass you when your assumptions are wrong.

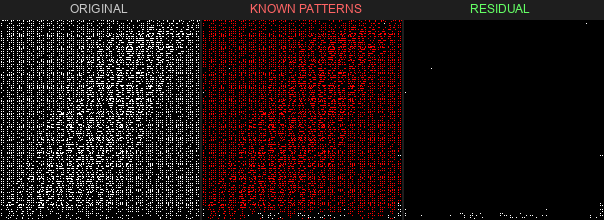

Most of the work revolved around a three-panel visualization: the raw prime density, the structure we can explain (polynomial families, modular constraints), and the residual (what’s left when you subtract known structure from observed structure). In theory, these panels should conserve mass: known plus residual should add back up to raw.
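A minimal sketch of that bookkeeping, assuming each panel is a grid of per-pixel prime counts with the same shape. `residual_panel` and `check_conservation` are hypothetical names, not Prime_Plot_AI’s API. The useful habit is running the check on the arrays you actually display, not just the ones you compute, because a rendering step is exactly where mass can quietly disappear.

```python
def residual_panel(raw, known):
    """Residual = raw - known, cell by cell. Grids are lists of equal-length rows."""
    return [[r - k for r, k in zip(raw_row, known_row)]
            for raw_row, known_row in zip(raw, known)]


def check_conservation(raw, known, residual, tol=1e-9):
    """Return the (row, col) cells where known + residual != raw.

    An empty list means the three panels conserve mass. Negative residuals are
    flagged too (a design choice here): they mean the "known" panel claims
    primes that the raw panel doesn't contain.
    """
    problems = []
    for i, (raw_row, known_row, res_row) in enumerate(zip(raw, known, residual)):
        for j, (r, k, s) in enumerate(zip(raw_row, known_row, res_row)):
            if abs((k + s) - r) > tol or s < -tol:
                problems.append((i, j))
    return problems


raw = [[2, 0], [1, 3]]
known = [[1, 0], [1, 0]]
residual = residual_panel(raw, known)
print(residual)                                          # [[1, 0], [0, 3]]
print(check_conservation(raw, known, residual))          # []
print(check_conservation(raw, known, [[0, 0], [0, 0]]))  # [(0, 0), (1, 1)]
```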

In practice, that brings us to the bug.

When the Normalization Lies

Halfway through the project, I realized my three-panel visualization was feeding me garbage.

The raw panel showed dense patterns. The known patterns panel showed almost nothing. The residual panel showed almost nothing. They didn’t add up visually. If the panels don’t conserve, I’m not doing discovery—I’m generating decorative nonsense.

The bug was stupid. When normalizing grids (dividing by the max count so values range from 0 to 1), a pixel holding one prime ends up at about 0.33 if the busiest pixel holds three. My code used a threshold of > 0.5 to decide whether a pixel “contained primes,” so the display logic was literally erasing real primes because they weren’t bright enough.
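Here is that failure mode in miniature, as a hedged sketch rather than the project’s actual code; `normalize`, `occupied_buggy`, and `occupied_fixed` are hypothetical names. With a peak of three primes, a one-prime cell normalizes to 1/3 and falls under the 0.5 cutoff.

```python
def normalize(counts):
    """Scale a grid of prime counts to 0..1 by dividing by the largest count."""
    peak = max(max(row) for row in counts) or 1  # avoid dividing by zero on an empty grid
    return [[c / peak for c in row] for row in counts]


def occupied_buggy(norm):
    # The bug: brightness is used as a presence test, so any cell at or
    # below half the peak is treated as empty even if it holds a prime.
    return [[v > 0.5 for v in row] for row in norm]


def occupied_fixed(norm):
    # Presence is yes/no: any nonzero value means at least one prime is there.
    return [[v > 0 for v in row] for row in norm]


counts = [[3, 1],
          [0, 2]]
norm = normalize(counts)
print(occupied_buggy(norm))  # [[True, False], [False, True]]  (the 1-prime cell vanished)
print(occupied_fixed(norm))  # [[True, True], [False, True]]
```

Safer still is to ask the presence question of the raw counts before normalizing, and keep normalization purely for display.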

Fixing it was easy. Accepting the operational lesson was harder.

If you’ve ever dealt with a security tool that showed green dashboards while the network was on fire, you know the sensation. Clean visuals, zero signal. When an LLM generates your code, you don’t get to stay ignorant. The instant the output looks wrong, you either understand the math well enough to debug it—or you’re just watching lights blink.

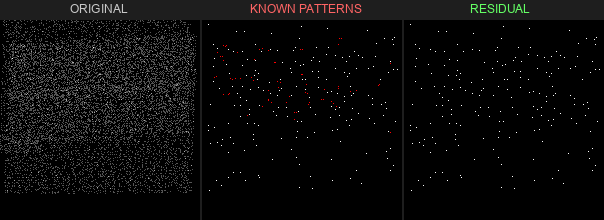

What Shook Out

Once the bug was dead, the system started telling a more honest story. Here are a few things it surfaced early:

The obvious structure is just math doing math

The diagonal lines in the Ulam spiral are largely explained by quadratic polynomials of the form 4n^2 + bn + c. The modular grids are residue class constraints. The golden-angle spirals are geometry doing exactly what geometry does. The tool kept rediscovering basic number theory from first principles. If you build a pattern detector and it doesn’t find the patterns mathematicians have already catalogued, you have a broken detector.
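A quick way to see why some diagonals light up and others stay dark is to score a quadratic directly. `prime_fraction` is a hypothetical helper, not part of Prime_Plot_AI. The two examples are textbook cases: 4n^2 + 4n + 1 = (2n + 1)^2 is the diagonal of odd squares running out from the center of the spiral, so it can never hold a prime, while 4n^2 - 2n + 41 is Euler’s prime-rich k^2 - k + 41 sampled at even k = 2n, which is prime for every n from 0 through 20.

```python
def is_prime(n):
    """Trial division; adequate for the small values sampled here."""
    if n < 2:
        return False
    if n % 2 == 0:
        return n == 2
    d = 3
    while d * d <= n:
        if n % d == 0:
            return False
        d += 2
    return True


def prime_fraction(b, c, count):
    """Fraction of n in 0..count-1 for which 4n^2 + bn + c is prime."""
    hits = sum(1 for n in range(count) if is_prime(4 * n * n + b * n + c))
    return hits / count


print(prime_fraction(4, 1, 50))    # 0.0 -> (2n+1)^2, the odd-squares diagonal
print(prime_fraction(-2, 41, 21))  # 1.0 -> Euler's k^2 - k + 41 at k = 2n
```

A real detector would compare each fraction against the baseline prime density for numbers of the same size, since primes thin out roughly like 1/ln x; a raw fraction on its own says little.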

Structure is leverage

Once you can label the obvious structure, you can stop searching the entire haystack. I ran a benchmark: how much faster can we find primes if we stop being naïve?

| Method | Speedup vs. Brute Force |
|---|---|
| Polynomial-First | 1.8x |
| Modular Density | 1.37x |
| Stacked (Combined) | 1.46x |

Nobody’s cracking the Riemann Hypothesis with a 1.8x speedup. But in security terms, this is the difference between scanning your entire network versus scanning the segments you know are exposed. Same job, better targeting. Structure you can measure is structure you can exploit.
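The post doesn’t include the benchmark code, so this is only a hypothetical illustration of why modular constraints pay off, not a reproduction of the table above. Every prime above 3 is congruent to 1 or 5 mod 6, so a scan can skip two thirds of its candidates without losing a single prime. `brute_candidates` and `mod6_candidates` are made-up names. Counting candidates tends to overstate the wall-clock gain, because the numbers you skip (evens and multiples of 3) are the ones trial division rejects cheaply anyway.

```python
def is_prime(n):
    """Trial division primality test."""
    if n < 2:
        return False
    if n % 2 == 0:
        return n == 2
    d = 3
    while d * d <= n:
        if n % d == 0:
            return False
        d += 2
    return True


def brute_candidates(limit):
    """Every integer from 2 to limit: the naive scan."""
    return range(2, limit + 1)


def mod6_candidates(limit):
    """2 and 3, then only integers congruent to 1 or 5 mod 6."""
    small = [n for n in (2, 3) if n <= limit]
    return small + [n for n in range(5, limit + 1) if n % 6 in (1, 5)]


limit = 20_000
brute = brute_candidates(limit)
filtered = mod6_candidates(limit)
# Both scans must find exactly the same primes, or the filter is wrong.
assert [n for n in brute if is_prime(n)] == [n for n in filtered if is_prime(n)]
print(f"candidates tested: {len(brute)} vs {len(filtered)} "
      f"({len(brute) / len(filtered):.2f}x fewer)")
```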

The residuals are the point

Subtract what you can explain, and the leftovers get interesting. Sometimes it’s noise. Sometimes the tool is politely telling you that you don’t even know what question you’re asking yet.

I’m not going to pretend this is a smoking gun. But it’s a map of what we’ve explained and what we haven’t.

AI Speeds Up the Ignorance Loop

So, does leaning on Claude, Grok, or local models to write your code make you dumber?

It can—if you treat the APIs like a black box that just prints answers. I’ve seen this exact anti-pattern in security for 20 years: people deploy a shiny new tool, blindly trust the green dashboard, and stop looking at the raw packets. The tool becomes a comfort blanket instead of a force multiplier.

But if you use these models to accelerate into harder problems, the opposite happens. You hit the wall of your own ignorance a lot faster. The AI wrote plenty of the code for this project, but when the visualizations were subtly lying to me, I still had to debug the math. It’s a force multiplier, not an autopilot. Take the human out, and you just end up generating pretty pictures that don’t conserve mass.

C99 Soul vs. Rust Velocity

While I was building Prime_Plot_AI, two friends from the security community went down their own prime rabbit holes. The contrast is too perfect to ignore.

Tom Liston (@tliston) built pi_sieve: a hand-crafted C99 prime sieve with platform-specific SIMD intrinsics and wheel factorization. It saves progress to disk, survives crashes, and features a meditative terminal display that scrolls primes against a “Time Running” header. It is a human being hand-tuning low-level code to do one thing beautifully, forever.
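Tom’s code isn’t reproduced here. As a hedged, pure-Python sketch of the wheel idea his sieve builds on: the smallest possible wheel (modulus 2) simply stops storing even numbers, which halves memory and skips all the crossing-off of even multiples. Larger wheels, such as mod 30, also skip multiples of 3 and 5 at the cost of messier indexing. `odd_only_sieve` is a hypothetical name.

```python
def odd_only_sieve(limit):
    """Primes <= limit from a sieve that stores only odd numbers (a mod-2 wheel)."""
    if limit < 2:
        return []
    # Index i stands for the odd number 2*i + 1, so index 0 is 1 (not prime).
    size = (limit - 1) // 2 + 1
    is_candidate = bytearray([1]) * size
    is_candidate[0] = 0
    i = 1
    while (2 * i + 1) ** 2 <= limit:
        if is_candidate[i]:
            p = 2 * i + 1
            start = (p * p) // 2  # index of p*p; smaller multiples were already crossed off
            # Consecutive odd multiples of p are 2p apart, i.e. p index slots apart.
            is_candidate[start::p] = bytearray(len(range(start, size, p)))
        i += 1
    return [2] + [2 * j + 1 for j in range(1, size) if is_candidate[j]]


print(odd_only_sieve(50))
```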

Dragos Ruiu (@secwest) saw Tom’s project and responded with fast-prime: a Rust-based toolkit built with heavy AI assistance. The V3 Meissel-Lehmer implementation counts all primes up to one trillion in 0.168 seconds on a single thread. The repo includes three OPTIMIZATIONS.md files documenting every aggressive algorithmic pivot attempted.
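Meissel-Lehmer is too long to show here, but its starting point fits in a few lines. The sketch below is Legendre’s formula, π(x) = φ(x, a) + a - 1 with a = π(√x), where φ(x, a) counts the integers up to x with no prime factor among the first a primes. Meissel and Lehmer speed this up by calling φ with far fewer primes and adding correction terms. This naive, memoized version is a hypothetical illustration, not Dragos’s code, and it is nowhere near fast enough for x = 10^12.

```python
from functools import lru_cache
from math import isqrt


def primes_up_to(n):
    """Plain sieve of Eratosthenes for the small primes Legendre's formula needs."""
    if n < 2:
        return []
    sieve = bytearray([1]) * (n + 1)
    sieve[0] = sieve[1] = 0
    for i in range(2, isqrt(n) + 1):
        if sieve[i]:
            sieve[i * i::i] = bytearray(len(range(i * i, n + 1, i)))
    return [i for i in range(2, n + 1) if sieve[i]]


def legendre_pi(x):
    """Count primes <= x: pi(x) = phi(x, a) + a - 1, where a = pi(sqrt(x))."""
    if x < 2:
        return 0
    primes = primes_up_to(isqrt(x))
    a = len(primes)

    @lru_cache(maxsize=None)
    def phi(n, k):
        # Integers in 1..n not divisible by any of the first k primes.
        if k == 0 or n == 0:
            return n
        return phi(n, k - 1) - phi(n // primes[k - 1], k - 1)

    return phi(x, a) + a - 1


print(legendre_pi(10**6))  # 78498
```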

Tom hand-wrote artisanal, SIMD-tuned C, optimizing for persistence and craft. Dragos used AI to iterate through sophisticated algorithms at a blistering pace, computing in fractions of a second what Tom’s sieve will meditate on for hours.

If you want the real-world version of my AI coding verdict, look at those two repos. The hand-built version has soul. The AI-assisted version has speed. The interesting question isn’t which one is better. It’s what happens when you bring both instincts to the same problem.

The Code

The full project, including the visualization engine and discovery framework, is on GitHub:

If you find a real residual pattern that holds up under subtraction, I definitely want to see it.
