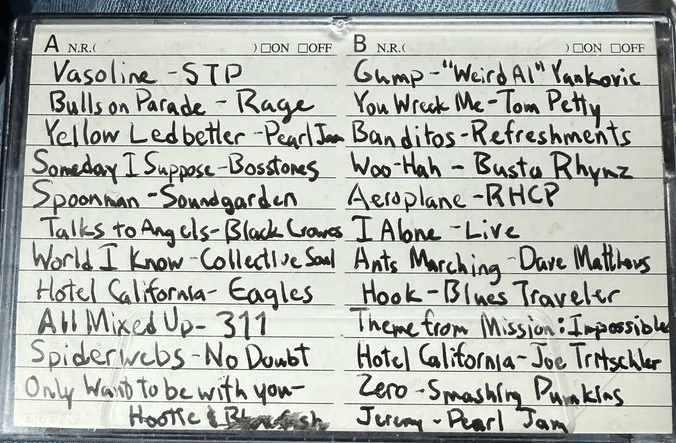

It started with a picture of a cassette case.

Someone posted it on social media, a handwritten track listing, both sides, the kind of careful block lettering you only see from someone who really cared about their mix. Stone Temple Pilots. Soundgarden. Rage Against the Machine. Pearl Jam. The full mid-90s rotation, twenty-three tracks deep.

I looked at it and felt something between nostalgia and compulsion. I wanted to hear this mix. Not a Spotify approximation, the actual songs, in order, played from my own library. But I was not about to type twenty-three artist-title pairs into a search bar one at a time like some kind of animal.

So I fed the photo to an AI and asked it to read the track list. Seconds later I had a clean M3U8 playlist, every track formatted as Artist – Title with a filename. Twenty-three entries. The easy part was done.

The hard part was the other end of the equation.

The 85,000-File Problem

My iTunes library is a sprawl. Eighty-five thousand files organized by Artist, then Album, then track number and title. Different extensions depending on when I ripped or bought the thing, mp3, m4a, flac, aac. The playlist says Soundgarden – Spoonman.mp3. The file on disk lives two directories deep with a track number prefix and a different extension. Nothing lines up.

I needed a resolver. Something to take each line of the playlist, search the whole library, and find the right file.

I was not going to write this by hand either.

Build Fast, Match Wrong

I pointed Claude Code at the problem and let it rip. Within minutes I had a working script, an M3U parser, a file indexer that walks a root directory and catalogs every music file, and a fuzzy token matcher that breaks filenames into words and looks for overlaps.

First run. Things matched.

They matched terribly.

Rage Against the Machine resolved to Bikini Kill. The Black Crowes, also Bikini Kill. Collective Soul, Bikini Kill again. Hootie and the Blowfish, you guessed it. Four completely different artists, four completely different songs, one very confused punk band getting credit for all of them.

Blues Traveler’s “Hook” matched to Billy Cobham’s “Mushu Creole Blues.” Because the word “blues” appeared in both. “Live – I Alone” matched to the Brooklyn Tabernacle Choir’s Live … Again because the word “live” was in the album title. Smashing Pumpkins found the right artist but the wrong song entirely.

The fuzzy matcher had no concept of which words mattered. “The” counted as a match. One overlapping token was enough to declare victory. It was less a resolver and more a random track generator with delusions of competence.

The Overcorrection

Round two was surgery. I had Claude add stopword filtering to kill common words that carry zero signal. A minimum of two meaningful token overlaps before even considering a match. A title coverage gate. An artist mismatch cap. Jaccard similarity instead of raw overlap.

The false positives vanished. Every single one. Bikini Kill went back to their lane. Billy Cobham kept his blues to himself.

But I’d swung the pendulum too far. Eighteen out of twenty-three tracks went unresolved. The gates that killed the false positives also killed the real matches.

Precision achieved. Recall destroyed.

The Data Was in the Files All Along

The resolver had been building candidate strings from the album folder and filename, so for Spoonman, it was working with “Superunknown 08 Spoonman.” The artist name lives one directory higher: Soundgarden. That word never entered the comparison. A query for “Soundgarden – Spoonman” overlapped on exactly one token and got bounced by the minimum.

Same story for single-word titles. “Hook.” “Zero.” “Gump.” “Spoonman.” Each has one meaningful token after normalization. Even if you fix the directory problem, the two-token gate kills them because the title can only contribute one match.

But here’s the thing, iTunes files have ID3 tags. Embedded metadata with clean artist and title fields. Not inferred from directory names. Not mangled by filesystem conventions. The library already knew that 08 Spoonman.mp3 was by Soundgarden with the title “Spoonman.” I just wasn’t asking.

The fix was mutagen, a Python library that reads tags from basically everything. One call per file. Read the artist. Read the title. Compare them directly against the query fields. When you’re matching “Soundgarden” against an artist tag and “Spoonman” against a title tag, you don’t need a two-token minimum. The precision comes from the structure, not the volume.

I also added the grandparent directory to the path-based fallback and relaxed the minimum for single-token titles. Belt and suspenders.

Two Ghosts on the Way Out

The metadata strategy worked beautifully on the first run, except for two tracks that shouldn’t have matched at all.

“Live – I Alone” resolved to some file called “COUNTING THE STARS ALONE” by an unknown artist. The word “alone” appeared in both titles, and the artist cap didn’t fire because the candidate file had no artist metadata at all. An empty string evaluated as falsy in Python and short-circuited the safety check. One condition change fixed it.

“She Talks to Angels” by The Black Crowes resolved to “Hard to Handle”, right artist, completely wrong song. The file happened to live inside an album folder called “She Talks to Angels.” The resolver matched the query title against the directory name, not the filename. Album names are not song titles. A gate requiring at least one title token in the actual filename fixed that one.

Both bugs had the same shape: a guard that looked correct until edge-case data slipped through sideways.

22 Out of 23

Final run. Twenty-two of twenty-three tracks resolved, all via metadata, all correct.

The one holdout, Joe Tritschler’s “Hotel California”, isn’t in my library. That’s not a matching failure. That’s an inventory problem. I found a copy of the album on eBay. Just waiting for it to arrive.

Every track that had been unreachable in round two came back. Soundgarden. Pearl Jam. Blues Traveler. No Doubt. Dave Matthews Band. Smashing Pumpkins. 311. Weird Al. Hootie and the Blowfish. Single-token titles. A numeric artist. Special characters. Compilations. All resolved cleanly.

The cassette mix sounds exactly as good as I hoped.

The Shape of the Thing

There’s a narrative about AI-assisted coding that goes something like: describe what you want, the AI writes it, you ship it. One shot. Clean.

This project went nothing like that.

It went: build a thing, run it, watch it fail in ways you didn’t predict, understand why it failed, fix that, run it again, discover a different failure mode, fix that too. Three rounds. A false-positive meltdown followed by a false-negative overcorrection followed by a metadata breakthrough followed by two edge-case cap bugs that only surfaced against real data.

The AI didn’t solve the problem. The AI accelerated the loop. Each round of investigation and implementation happened in minutes instead of hours. The iteration speed was the superpower, not some mythical first-try accuracy.

That’s the real shape of vibe coding. Not a straight line from prompt to product. A tight spiral of build, break, understand, fix. The cassette tape took longer to photograph than any individual round of coding took to execute. But the thinking between rounds, the failure analysis, the wait, what if I just read the tags moment,that was mine.

If you’ve got a photo of a mixtape and a library full of files, point an AI at both ends and meet in the middle.

Leave a comment